Quantum is coming. Maybe not today, maybe not tomorrow, but soon enough*. Within 10 to 12 years, we’re told, special-purpose quantum systems (see related story: Hyperion on the Emergence of the Quantum Computing Ecosystem) will enter the commercial realm. Assuming this happens, we can also assume that quantum will, over extended time, become increasingly general purpose as it delivers mind-blowing power.

Here’s the quantum computing dichotomy: even as quantum evolves toward commercial availability, very few of us in the technology industry have the slightest idea what it is. But it turns out there’s a perfectly good reason for this. As you’ll see, quantum (referred to as the “science of the very small”) is based on a non-human, non-Newtonian stratum of earthly existence, which means it does things, and acts in accordance with certain laws, for which we humans have no frame of reference.

Realizing why quantum is so alien can be liberating. It frees us from the gnawing worry that we’re not smart enough to ever understand it. It also means we can stop trying to fake it when quantum comes up in conversation. Speaking as a confirmed “Newtonian caveman” (see below), this writer asserts that at least the thinnest, outermost layer of quantum may not be as incomprehensible as we suppose. It might be a good idea if all of us were to make a late New Year’s Resolution to take a fresh stab at grasping quantum’s basic principles.

To help in this process, below are remarks delivered this week at the Rice University Oil & Gas HPC Conference in Houston by Kevin Kissell, technical director in Google’s Office of the CTO. In an interview last year, Kissell told us that while he works with Google’s quantum computing R&D group, he is by background a systems architect; his role with the quantum group is to advise his colleagues on assembling the technology into usable form.

“I’m not really a quantum guy,” he told us at SC17, “though I do read quantum physics textbooks in my spare time.”

Oh, ok.

If you’ve never been to a Kevin Kissell presentation at an industry conference, make a point of it at your next opportunity. It’s appointment viewing. The profusion of technical and scientific knowledge that pours forth, colored by humor, energy and intelligence, is something to see. A tech enthusiast, Kissell gives you the sense that he can’t get his thoughts and words out fast enough. He put on such a performance at the Houston conference, taking on the Herculean task of explaining quantum computing to the rest of us. To Kissell’s great credit, he did it with the empathy of a natural teacher who understands where comprehension stops and mystification begins.

Below is an excerpt of his remarks:

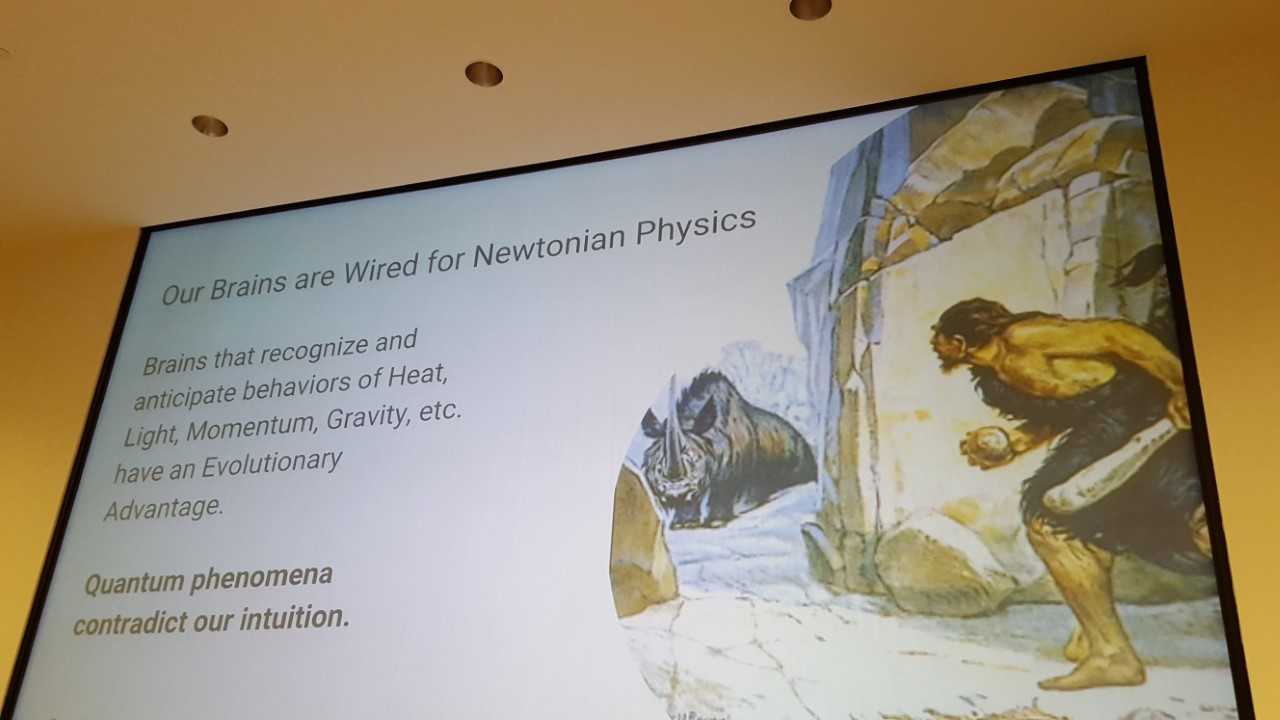

Google has been working on quantum computing for a while, and it’s really hard to explain to people sometimes. And it’s my belief that this is because our brains are not wired for it. There’s an evolutionary advantage in having a brain that understands Newtonian mechanics. Which is to say that when I throw a rock, it’s going to follow a parabola. Now it took us 10,000-20,000 years to be able to define a parabola mathematically. But the intuition that it’s going to start dropping – and dropping at an accelerated rate, because that’s what gravity does – that’s pretty instinctive because that’s a survival thing. But with quantum mechanics, there’s no reason why our brain needs to wrap itself around quantum mechanics in the same way, and in part this is because it contradicts intuition.

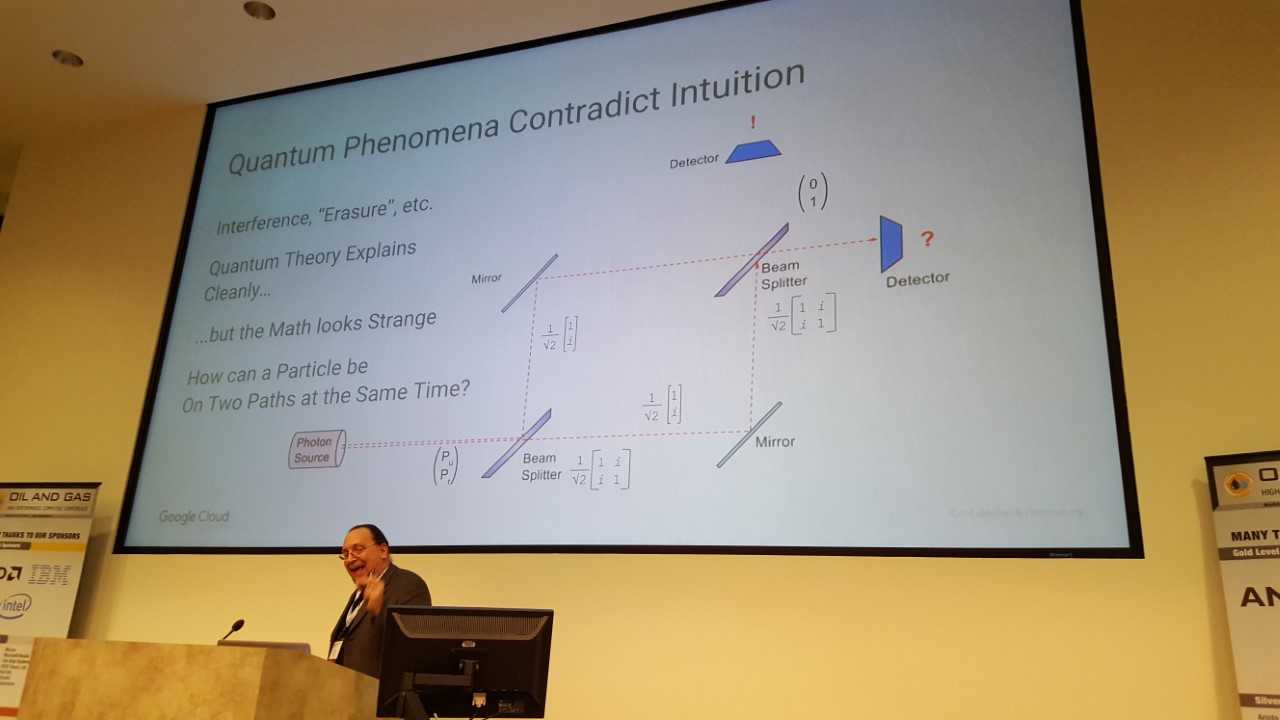

One of the classic examples that I found quite helpful in understanding this stuff is a demo that you can do with a laser. The classic model is having a controlled source of individual photons: you fire photons into a beam splitter, you have a couple of mirrors, you have another beam splitter and you have a couple of detectors.

Now my Newtonian caveman brain tells me what should be happening is that a statistically equal number of photons should be hitting each detector. But that’s not what happens. Because photons ain’t Newtonian things, they’re quantum things. And if you accept this just on faith – because I couldn’t derive this personally – that a beam splitter can be modeled as that matrix (see image) and that the path on which the photon is traveling can be thought of as a vector of a couple of probabilities, then I multiply that probability vector by the beam splitter, that gives me a resulting vector, and then I run that vector into the second beam splitter. The result I get is that the probability of it going into the upper target is zero and the probability that it goes to the target on the right becomes one. That seems strange, and the math only works if it is, at least mathematically, possible that the photon is on both paths at the same time.
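(Editor’s sketch, not part of Kissell’s remarks: the arithmetic he describes can be reproduced in a few lines of Python. The slide’s matrix is not reproduced in this article, so the beam splitter below uses a common textbook convention and should be read as an assumption. The helper names `apply` and `probabilities` are ours, and which index corresponds to the “upper” or “right” detector depends on how the apparatus is drawn.)

```python
import math

# Assumed 50/50 beam splitter (a common textbook convention; the matrix
# on the slide may use a different but equivalent phase choice).
S = 1 / math.sqrt(2)
BEAM_SPLITTER = [[S, S * 1j],
                 [S * 1j, S]]

def apply(matrix, vector):
    """Multiply a 2x2 matrix by a 2-element vector of complex amplitudes."""
    return [sum(matrix[r][c] * vector[c] for c in range(2)) for r in range(2)]

def probabilities(vector):
    """Detection probability is the squared magnitude of each amplitude."""
    return [abs(a) ** 2 for a in vector]

photon_in = [1 + 0j, 0j]                         # photon enters on path 0
after_first = apply(BEAM_SPLITTER, photon_in)    # amplitude on both paths
after_second = apply(BEAM_SPLITTER, after_first)

print(probabilities(after_first))    # roughly an even split between paths
print(probabilities(after_second))   # roughly all on one detector
```

After one beam splitter the photon has equal weight on both paths. After the second, the two contributions to one detector cancel and the contributions to the other reinforce, which is the all-or-nothing result described above. The cancellation only appears because the vector carries complex amplitudes for both paths at once rather than plain probabilities.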

This hurts our brains, but this seems to be the way the universe works at a microscopic level.

And so, taking this…, if I think of my element of data as a quantum bit – or a qubit – it’s not something that I can represent as an on/off thing. In fact the usual graphical representation is a point on a sphere. So you can represent that point on a sphere as X-Y-Z coordinates, or I can represent it as a pair of angles relative to the basis. Typically, it’s done with angles. It hurts my eyes to read it, but that’s the way it’s done.
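(Editor’s sketch, not part of the talk: the sphere in question is usually called the Bloch sphere. Assuming the standard parameterization, in which a polar angle `theta` and an azimuthal angle `phi` define the state cos(theta/2)|0> + e^(i·phi)·sin(theta/2)|1>, the two representations Kissell mentions convert as follows. The function names are illustrative.)

```python
import cmath
import math

def qubit_from_angles(theta, phi):
    """Amplitudes (a0, a1) of the qubit at polar angle theta, azimuth phi."""
    a0 = math.cos(theta / 2)
    a1 = cmath.exp(1j * phi) * math.sin(theta / 2)
    return a0, a1

def bloch_xyz(theta, phi):
    """The same point expressed as X-Y-Z coordinates on the unit sphere."""
    return (math.sin(theta) * math.cos(phi),
            math.sin(theta) * math.sin(phi),
            math.cos(theta))

theta, phi = math.pi / 2, 0.0          # a point on the sphere's equator
a0, a1 = qubit_from_angles(theta, phi)

print(bloch_xyz(theta, phi))           # close to (1, 0, 0)
print(abs(a0) ** 2, abs(a1) ** 2)      # close to 0.5 and 0.5
```

In this convention the north pole (`theta = 0`) is a plain 0 and the south pole is a plain 1. Every other point is a weighted mix of the two.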

What’s cute about this is that with a normal bit, it’s 0 or 1…. (But) the quantum bit actually just has that photon which is on both paths at the same time. So this qubit is in a certain sense both 0 and 1 at the same time. It’s got a couple of values that are superimposed on it.

That’s kind of cool, but what is cooler is that if I’ve got two qubits then the vector spaces just sort of blossom. If I have two bits, I can express a value and I have four options that I can express. But if I have two qubits I can express four values at the same time. And that’s the power of it. It’s just exponentially more expressive, if you can actually master it.
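(Editor’s sketch, not part of the talk: one way to see the “blossoming” is that the joint state of two qubits is the tensor product of the individual states, so it carries one amplitude for each of the four classical bit patterns. The `tensor` helper and the choice of an equal superposition are illustrative assumptions.)

```python
import math

def tensor(a, b):
    """Kronecker (tensor) product of two amplitude lists."""
    return [x * y for x in a for y in b]

s = 1 / math.sqrt(2)
plus = [s, s]                      # one qubit: equal mix of 0 and 1

two_qubits = tensor(plus, plus)    # four amplitudes held at once
labels = ["00", "01", "10", "11"]

for label, amp in zip(labels, two_qubits):
    print(label, round(abs(amp) ** 2, 3))

def amplitude_count(n_qubits):
    """Number of amplitudes needed to describe n qubits."""
    return 2 ** n_qubits
```

Two classical bits hold one of those four patterns at a time. The two-qubit state holds a weight for all four, and each added qubit doubles the list.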

So if I have 50 qubits, that state space is actually up there with a very large (Department of Energy) machine. I don’t know if it’s up there with an exascale machine, but it’s getting way up there. If I have 300 qubits, in principle I can represent and manipulate more states than there are atoms in the universe.

And, very conveniently, if I have 333 qubits I can represent a googol. (audience laughs) I’m not saying that that’s our design goal, but I’ll be very surprised if we don’t do at least a few runs with a 333-qubit machine… (more laughs)…
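(Editor’s sketch, not part of the talk: the scale claims are easy to check with Python’s arbitrary-precision integers. The figure of 10^80 atoms is a commonly quoted order-of-magnitude estimate, and 16 bytes per amplitude assumes one complex number stored as two 64-bit floats. Both are our assumptions. The 333 in the joke turns out to be the smallest qubit count whose state count exceeds a googol, 10^100, the number behind the company’s name.)

```python
ATOMS_IN_UNIVERSE = 10 ** 80   # assumed order-of-magnitude estimate
GOOGOL = 10 ** 100
BYTES_PER_AMPLITUDE = 16       # assumed: complex number as two 64-bit floats

def state_count(n_qubits):
    """Number of basis states spanned by n qubits."""
    return 2 ** n_qubits

def statevector_bytes(n_qubits):
    """Memory needed to store every amplitude on a classical machine."""
    return state_count(n_qubits) * BYTES_PER_AMPLITUDE

# 50 qubits: pebibytes of memory just to hold the state classically.
print(statevector_bytes(50) / 2 ** 50, "PiB")

# 300 qubits: more states than the assumed atom count.
print(state_count(300) > ATOMS_IN_UNIVERSE)

# 333 qubits: the first count to pass a googol.
print(state_count(332) < GOOGOL < state_count(333))
```

Under these assumptions the 50-qubit state vector needs 16 PiB, which is why it invites comparison with the largest supercomputers, and both comparisons print `True`.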