Apple does more than many companies to champion the idea of augmented reality, but it needs to do a better job showing why we should share that vision. Lately, Google has been taking up the slack.

Among other things, Google has shown us how to use AR in Google Maps to get a clear idea of where we need to go. It’s shown us how we can see if clothes match our wardrobe, and how we can use phone cameras to read signs in other languages.

As for Apple? Its AR announcements tend to focus on third-party novelty and educational apps, such as a recent one that lets you go on virtual tours of the Statue of Liberty. Lazy Sunday stuff, in other words. Unlike Google, Apple hasn’t been showing us how AR could be something we turn to in the hectic moments of everyday life. I worried about this last year, and I haven’t seen much aside from rumors that convince me the situation is any better now.

Apple still has the potential to be the AR leader, and I doubt that leadership will look anything like Google’s data-driven approach. Google is untouchable in that regard. Apple can, however, do a better job of showing us how AR can enhance our lives without invasive expeditions into our data. It can show us how AR can be useful: a tool as well as a toy. Apple can certainly make AR look cool, if anyone can. These are the best ideas for making that turnaround a reality.

Put ‘TrueDepth’-like 3D sensors on the rear iPhone camera

If Apple wants to leap in the right direction, it should put something like the TrueDepth sensor on the rear camera. The machine-learning model used by Apple's ARKit on modern iPhones just isn't accurate enough. Google attempted something similar with Project Tango in 2014, but the effort was hampered by the fragmented nature of the pre-Pixel Android landscape. In 2017, though, reliable analyst Ming-Chi Kuo predicted that we could see an iPhone with such a 3D sensor shipping this year. (At this point, it's safe to assume we'll probably see it in a future year, if it exists at all.)

Leif Johnson/IDG

It’s ridiculously hard to use the TrueDepth sensor when you can’t see what you’re scanning.

All rumors suggest that such a sensor wouldn’t work exactly like TrueDepth, but the end result would be much the same. TrueDepth works by projecting more than 30,000 invisible infrared dots onto your face and reading how that pattern deforms, while this new "time-of-flight" method would measure how long it takes for laser light to bounce back from the objects it hits. If it works as intended, it’ll significantly boost accuracy, which should in turn boost popularity. Developers would have more reason to experiment, as they wouldn’t feel as limited by the current model’s confusion in low light or when it struggles to tell two similarly colored objects apart.
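For the curious, the basic time-of-flight relationship explains why such a sensor needs extremely precise timing. The light travels out to an object and back, so the distance is half the round trip. This is a back-of-the-envelope illustration of the general principle only, not a description of how Apple's rumored sensor would actually be built:

$$
d = \frac{c\,\Delta t}{2}
\qquad\Longrightarrow\qquad
\Delta t = \frac{2d}{c} = \frac{2 \times 1\ \text{m}}{3.0 \times 10^{8}\ \text{m/s}} \approx 6.7\ \text{ns}
$$

Here $d$ is the distance to the object, $c$ is the speed of light, and $\Delta t$ is the measured round-trip time. In other words, an object one meter away returns its light in a few billionths of a second, so even tiny timing errors translate into noticeable depth errors.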

We’ve already seen that TrueDepth works so well that some developers are using it for rudimentary 3D scanners, and I’d love to see what they’re capable of when similar technology makes it to the rear lenses.

Further incorporate AR into FaceTime and smaller features

People frequently say that AR won’t take off until Apple makes good AR glasses, but those people overlook the importance of helpful smaller features on our phones. So many apps seem made for glasses because they force you to keep the app and camera running for battery-draining minutes at a time, whether it’s the Statue of Liberty model or a game like Smash Tanks.

We need more apps that think “smaller.” We need these apps to be useful. Apple’s Measure app is a great example of a helpful AR app that doesn’t need to stay open for more than a few seconds, and it and other apps will only get more accurate and useful once the iPhone sports a proper 3D sensor like the one described above. They’re novelties now, but one day they could be necessities.

In the best scenarios, we wouldn’t even have to open extra apps to experience the wonders of AR. It’d be right there in the camera. (Indeed, Google already does this with Google Lens on Pixel phones.) Again, Apple doesn’t have Google’s data prowess, but it could distinguish itself by letting us further incorporate AR into FaceTime conversations. Apple already hints at this by letting us use Memoji and Animoji in FaceTime with the front TrueDepth sensors, but more interesting is the kind of rear-camera interactivity found in apps like Vuforia Chalk.

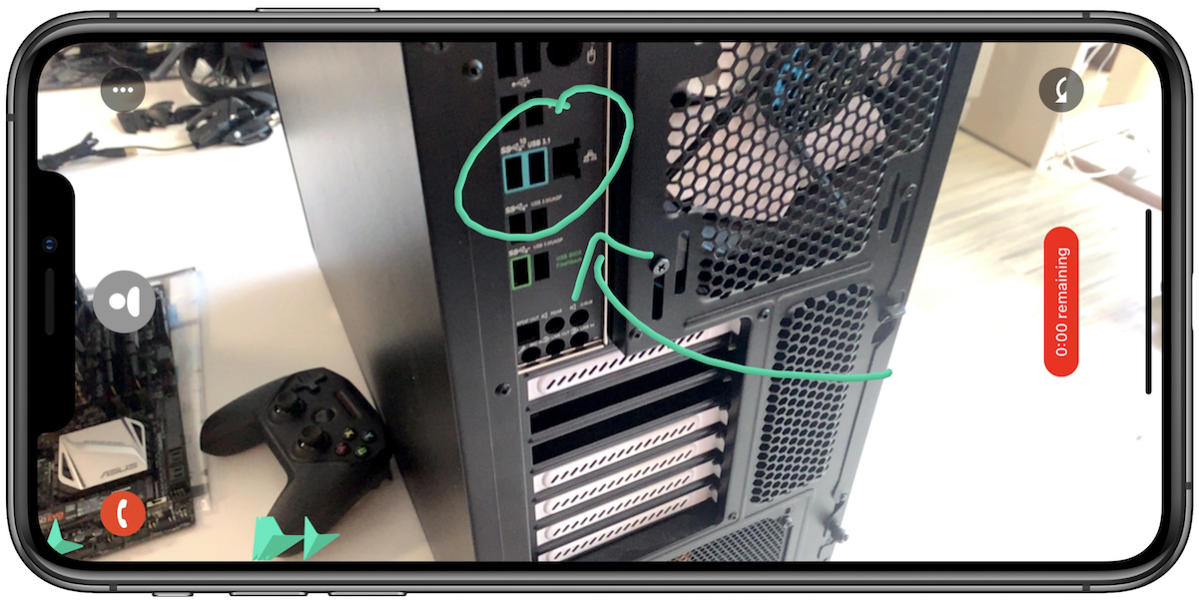

Leif Johnson

No more, “No, not that port, the other one! The other other one!”

In this app, two people in a conversation can view each other’s rear camera feeds and then draw around specific objects onscreen. Each person’s lines show up in a different color, and the circles and arrows stay in place even if the camera moves, so it’s fantastic for helping Dad find the HDMI port or the right box on a grocery store shelf from thousands of miles away. It’s a bit wonky right now, but that will change with a proper rear 3D sensor.

It’s a seemingly small feature, and it may not even be used on the majority of FaceTime calls, but people would be grateful to have the option when they need it. In time, as Apple CEO Tim Cook said in 2016, we might find ourselves wondering “how we ever lived without it.”

Make stylish, affordable AR glasses

Good AR needs to start on the iPhone, but Apple needs a headset for more involved AR experiences. If Apple manages to produce a pair of AR glasses that are portable, stylish, and (relatively) affordable, it could be looking at its first true “game-changer” in years.

Rumors suggest Apple already has such devices in the works. The first, as reported by CNET last year, is an AR/VR headset codenamed T288 that’ll supposedly come out next year. That seems wildly optimistic, though, in part because this space-age device is supposed to support a whopping 8K resolution in each eye and a mega-powerful 5nm custom Apple processor.

The other device is a pair of AR-only glasses, as analyst Ming-Chi Kuo predicted last March. Much as with the original Apple Watch, the iPhone would handle the bulk of the processing, which in this case would include wireless networking and global positioning. The glasses themselves would only handle orientation and the display. Kuo claims Apple will start making these devices next year and ship them sometime in 2020.

The AR/VR headset sounds cool, but I’d place my bets on the glasses being the revolutionary “one more thing” gadget. It’s hard to overstate the importance of making an AR headset look cool before the public embraces one fully, and tethering could be the way Apple pulls that off. Tethering would help with battery life and weight, but most importantly, it would allow Apple to make such glasses look only a little different from regular sunglasses, especially if it manages to hide the sensors in the lenses or the rims. That’d be a big improvement over devices like Google Glass, which infamously made people look like nerdy creepers.

USPTO

Speaking of nerds, here’s a concept from one of the patents for Apple’s headset.

Compared to “real” AR headsets, it could be revolutionary. Apple’s chief competition would be the Microsoft HoloLens 2, a clunky $3,500 rig that makes you look like one of the rebel air traffic controllers from Star Wars. Its battery only lasts for 2.5 hours. There’s also the less-established Magic Leap, which makes you look like a giant bug. Both devices are aimed at commercial enterprises and developers rather than consumers.

It’s hard not to love the “dream” behind devices like the HoloLens. Its multiple cameras scan the space in front of you, and it’s able to interpret gestures without the need for additional controllers. It could be great for teaching someone how to use industrial equipment on the fly, as astronaut Scott Kelly showed when he used a HoloLens to assist with operations aboard the International Space Station. Architects are already using it to see how their structures look “in person.”

The consumer uses for an Apple device could be nearly boundless. You could use such a device to read more information while viewing works of art in museums, or you might be able to use it to identify flowers on a weekend hike. You could have an AI pet, if you wished. It’d make us excited about technology again (and, for privacy, it probably wouldn’t hurt if Apple kept a proper camera off it and let 3D sensors do most of the imaging). And the time is ripe for Apple to work its magic and refine this technology, much as it did with the iPhone when BlackBerry and Palm were kings.

A word of caution: If you thought people hated you for wearing AirPods on the sidewalks, just wait until they see you wearing a pair of these.

Own the AR gaming space

I firmly believe AR won’t take off until Apple shows us how we can use it for mundane tasks, but gaming has its place, too. At the moment, though, AR gaming is a joke. It’s hard to point to 10 all-AR games that are truly worth playing. Plenty of games have neat AR options, but they tend to be the kind of thing you smile at for a few seconds before going back to playing the normal (and faster) way.

Apple is in a position to change all that, but we probably won’t see it until Apple releases glasses like the ones described above. They’re the key ingredient missing in so many promising AR apps. In AR Runner, for instance, you have to hold the phone while jogging so you can see the “course” before you. Meh. In Pokémon Go, you have to awkwardly move your phone around if you want to scout for Pokémon in the digital grass. Yawn. These activities tend to be tiring, especially when you end up holding up your phone for several minutes in a game like AR Dragon.

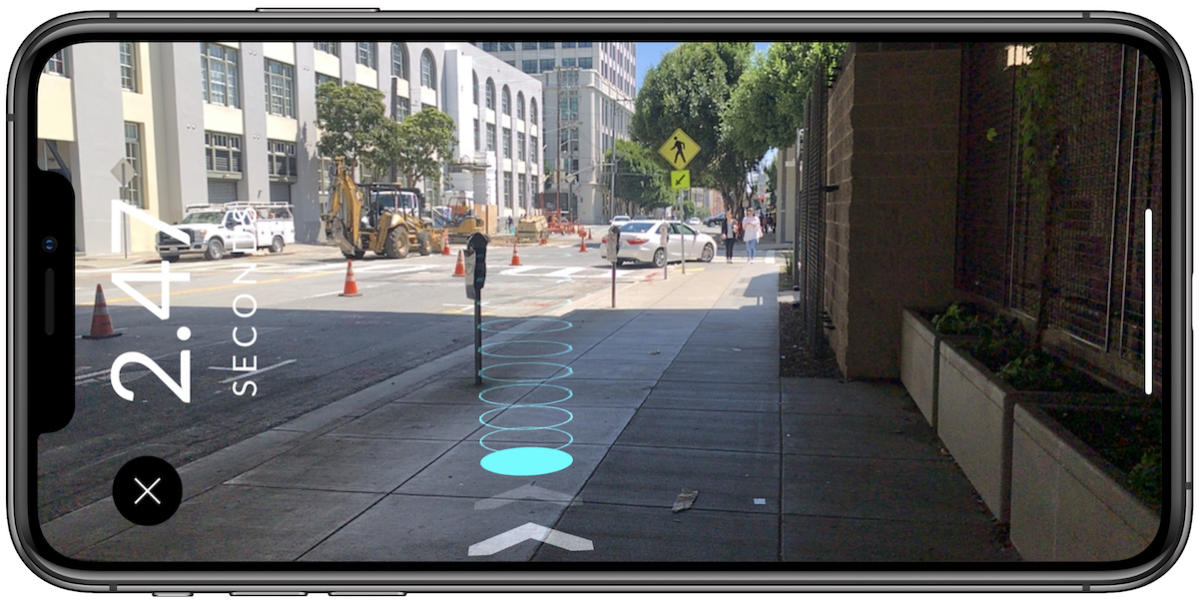

Leif Johnson

Honestly, you’d probably need AR contacts for AR Runner to look cool.

Lightweight AR glasses could make these apps seem as magical as their developers want them to be, and Apple itself may help bring other AR games into being. It’s already proven that it’s willing to partly fund creative “normal” games through Apple Arcade, so there’s no reason to believe it wouldn’t do the same with augmented reality. If it combines that kind of exclusive funding with good hardware, it could take competitors years to catch up. Apple’s competitors are already having a hard time producing anything as wonderful as Face ID.

Optimize AR for batteries

All this brings us to what’s probably at once the dullest and the most essential ingredient for Apple’s dominance: It needs to optimize AR. As it is, especially on the iPhone, augmented reality usually isn’t worth the trouble. It’s often slow to start, it’s imprecise, and it’s gimmicky. There’s little reason to use it when non-AR methods remain both more accurate and faster.

More importantly, AR drains your battery like water poured from a cup, as anyone who plays Pokémon Go can attest. Few if any other activities place such a strain on a phone, as robust AR apps juggle the camera, the motion sensors, the GPS, the CPU, the GPU, and the network radios all at once. Games like The Machines look great when Apple shows them on stage, but all those special effects will leave you reaching for a battery pack by the end of a couple of games. Nor is this just a problem with the iPhone. As I said above, the Microsoft HoloLens 2 manages only about 2.5 hours of battery life, and the Magic Leap reaches only about three.

I’m excited when I hear that Apple may offload a lot of the processing for its AR glasses to the iPhone because that means the headset won’t look like garbage, but I doubt it’ll do a lot to relieve the strain on the phone’s battery. On the other hand, if, in the near future, Apple emphasizes relatively brief and minor iPhone-based undertakings such as Measure or “interactive FaceTime,” it may not have to worry about those issues much until it’s ready to tackle them.

The harsh reality

Here’s a caveat to all this: Tim Cook has repeatedly said that AR isn’t ready for prime time.

“The field of view, the quality of the display itself, it’s not there yet,” he told The Independent in 2017, and much of that remains true today. Even though it’s exciting to speculate about all this stuff, the truth is that we may have to wait a while before Tim Cook’s dream augments our reality, predictions from analysts such as Ming-Chi Kuo aside.

I also believe it’ll take longer to get there if we think of an AR-based future as some kind of war between the approaches of Apple and Google. The best outcome would likely see their efforts working in tandem. Apple could wow us with precise and attractive hardware, whether it be the glasses or at least impressive rear 3D sensors on the iPhone. Google could deliver its data-driven goodies through Google Maps or Google Translate, which we could use on Apple’s hardware through Google’s own app. We’d all win. But of course I know it won’t be as simple as that.

I do know, though, that I’m excited to see whatever form it takes. I just want Apple to take its time and hold off on announcing whatever technology it comes up with until it’s ready for the stage. On some level, I’m worried about a cancellation fiasco like the one we saw with AirPower. But what if it releases a dud of an AR headset, one that makes about as few waves as the HomePod after all these years of experimentation and rumor?

That would be even worse.